For two decades, developer infrastructure products were distributed through a consistent, if labor-intensive, model: get the SDK installed by a single team, then use that foothold to expand across the organization. The main hurdle was always "developer bandwidth": the effort of persuading an engineer to add a new dependency, configure credentials, and write the first API call. AI coding tools such as Cursor and Claude Code are rapidly dismantling this long-standing bottleneck. With AI now contributing an estimated 4% of all public GitHub commits, a figure that is rising quickly, developer infrastructure companies need to adapt their products for AI coding agents, not as an enhancement but as a competitive necessity.

The Legacy Distribution Playbook: Friction and the Limits of PLG

Historically, leading developer tools companies recognized that successful distribution was less about sales tactics and more about friction reduction. The paradigm was to minimize the initial effort required for integration. This philosophy underpinned the success of services like Stripe, whose seven-line integration became legendary, Twilio’s straightforward copy-paste quickstarts, and Datadog’s simple one-command agent installation. The entire Product-Led Growth (PLG) playbook was an optimization around this core insight: create a magical first five minutes, and word-of-mouth would drive widespread adoption.

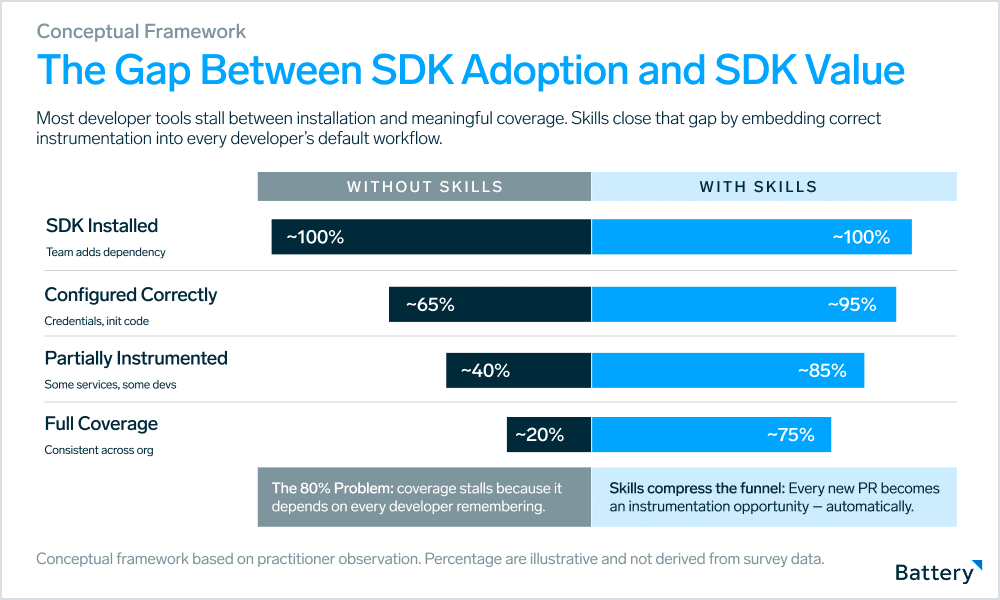

Yet, PLG, while effective at initial adoption, never fully addressed the second, more complex distribution challenge: expansion within an organization. While getting an SDK installed was the first step, ensuring its correct and consistent instrumentation across every service, feature, and team represented the unglamorous, ongoing work that determined whether a tool delivered its promised value or remained at a suboptimal 20% coverage.

Sudhee Chilappagari, reflecting on his experience as a Product Manager at Segment, highlighted these twin challenges. "The first was purely a developer-bandwidth problem," he noted. "PMs and marketers had to beg, borrow, and steal engineering time to get Segment implemented. We solved it by making analytics.js dead simple to install." The second challenge, a "best-practices problem," required a more robust solution. Segment addressed this by developing "Protocols," a tracking plan product designed to enforce a "plan first, track effectively later" discipline. They further innovated with "Typewriter," a type-safety plugin that auto-completed event code, reducing the need for developers to memorize intricate schemas. While these initiatives were significant and effective, they demanded the creation of entire product lines, dedicated engineering teams, and sustained investment to bridge the gap between mere installation and comprehensive, effective instrumentation.

This illustrates a crucial point: even for a company like Segment, which strategically prioritized solving this problem, the investment was substantial. In many other cases, tools remain at partial coverage not due to a lack of capability, but due to human inconsistency. Consequently, their full potential value is rarely realized.

The Agentic Development Paradigm Shift

AI coding agents, including but not limited to Claude Code and Cursor, are rapidly becoming the primary interface for developers writing and modifying code. This is not a marginal shift; it represents a fundamental alteration in workflow. When a developer’s default approach becomes "describe what I want, review the code," the AI agent acts as a powerful intermediary between developer intent and the actual codebase. This intermediary, crucially, is programmable.

With agents like Cursor and Claude Code, the initial hurdle of developer bandwidth effectively dissolves. Once AI generates the code, the excuse of "we can’t get engineering cycles" no longer holds. The real unlock is the ability to transfer the expertise of a company’s top solutions engineer to these agents. The critical question becomes: can an AI agent understand a customer’s industry, Ideal Customer Profile (ICP), goals, and desired outcomes well enough to properly instrument an SDK across an entire application?

If the answer is yes, the implication is profound: it signifies the availability of a "10x solutions engineer" for every customer account—an agent that works on every pull request, never takes a vacation, and never forgets naming conventions.

This capability is made possible by "agent skills." These are compact, installable context packages designed to educate AI coding agents on how a specific tool functions, what patterns to follow, and what pitfalls to avoid. With a single command, every interaction an agent has with a codebase is imbued with deep, opinionated knowledge of a particular SDK. This represents a fundamentally new distribution surface, distinct from anything that existed previously.

The Compounding Effect Within Organizations

The value proposition of many infrastructure products inherently scales with their coverage, and historically, coverage has been constrained by developer memory and discipline rather than technical limitations. Agent skills effectively shatter this constraint.

Consider observability tools. OpenTelemetry (OTel) is seeing widespread adoption across metrics, logs, and traces. However, OTel’s true value is amplified by comprehensive coverage: the more of a stack that is instrumented, the more coherent the traces and the more effective the debugging becomes. Achieving this coverage requires every developer, on every new service and endpoint, to consistently remember to add spans, propagate context, attach the correct attributes, and configure the appropriate exporter. This is not a technical challenge; it is a human memory challenge.

A well-designed OTel agent skill fundamentally alters this default behavior. The agent, equipped with the skill, understands that any new HTTP handler written necessitates instrumentation, any database call added requires wrapping, and any service boundary crossed demands context propagation. The developer is freed from the burden of remembering; the agent handles it.
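To make the "any new HTTP handler needs instrumentation" rule concrete, here is a minimal sketch of the kind of check such a skill could encode. It is not the OpenTelemetry API and not how any particular skill works. The naming convention (`handle_` prefix) and the `@traced` decorator are assumptions invented for illustration, and the check uses only Python's standard `ast` module.

```python
import ast

# Hypothetical conventions for this sketch: handlers are functions whose
# names start with "handle_", and instrumented ones carry a @traced decorator.
HANDLER_PREFIX = "handle_"
TRACE_DECORATOR = "traced"


def _decorator_name(node: ast.expr) -> str:
    """Return the bare name of a decorator such as @traced or @pkg.traced("x")."""
    if isinstance(node, ast.Call):
        node = node.func
    if isinstance(node, ast.Attribute):
        return node.attr
    if isinstance(node, ast.Name):
        return node.id
    return ""


def uninstrumented_handlers(source: str) -> list[str]:
    """List handler functions in `source` that lack the tracing decorator."""
    missing = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            continue
        if not node.name.startswith(HANDLER_PREFIX):
            continue
        names = {_decorator_name(d) for d in node.decorator_list}
        if TRACE_DECORATOR not in names:
            missing.append(node.name)
    return sorted(missing)


# The sample is only parsed, never executed, so `traced` need not exist.
SAMPLE_HANDLERS = '''
@traced
def handle_checkout(request):
    return "ok"

def handle_refund(request):
    return "ok"

def helper():
    return 1
'''

print(uninstrumented_handlers(SAMPLE_HANDLERS))  # ['handle_refund']
```

A real skill would more likely express this rule as instructions to the agent rather than as a linter, but the same convention could back a CI check that catches whatever the agent misses.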

This impact extends beyond adoption metrics. For the many developer infrastructure products with usage-based pricing (per-span volume, tracked events, monthly active users, or workflow executions), depth of coverage correlates directly with revenue. If billing scales linearly with usage, an account with only 20% instrumentation generates roughly 20% of its potential billing. Agent skills close this gap without requiring the acquisition of new customers. Each pull request an agent instruments translates into incremental Annual Recurring Revenue (ARR) that previously required a dedicated sales effort.
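The coverage-to-revenue relationship can be sketched as simple arithmetic. The example below assumes flat, linear usage-based pricing, while real price lists usually have tiers, minimums, and discounts. The account size and the price are hypothetical numbers chosen only to make the calculation easy to follow.

```python
def monthly_bill_cents(potential_units: int, coverage_pct: int,
                       cents_per_thousand: int) -> int:
    """Monthly bill in cents when only `coverage_pct` percent of an
    account's potential usage is actually instrumented."""
    if not 0 <= coverage_pct <= 100:
        raise ValueError("coverage_pct must be between 0 and 100")
    billed_units = potential_units * coverage_pct // 100
    return billed_units * cents_per_thousand // 1000


# Hypothetical account: 10 million potential events per month,
# priced at 5 cents per thousand events.
POTENTIAL_EVENTS = 10_000_000
PRICE_CENTS_PER_THOUSAND = 5

at_20 = monthly_bill_cents(POTENTIAL_EVENTS, 20, PRICE_CENTS_PER_THOUSAND)
at_60 = monthly_bill_cents(POTENTIAL_EVENTS, 60, PRICE_CENTS_PER_THOUSAND)

print(f"20% coverage: ${at_20 / 100:.2f} per month")
print(f"60% coverage: ${at_60 / 100:.2f} per month")
print(f"Annualized uplift: ${(at_60 - at_20) * 12 / 100:.2f}")
```

Under these assumptions, moving the same account from 20% to 60% coverage triples its bill with no new customer acquired, which is the expansion effect described above.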

This principle applies across a wide spectrum of developer infrastructure categories:

- Product Analytics (e.g., Pendo, Segment, Amplitude): While installation is typically straightforward, deriving value hinges on tagging every meaningful user interaction with precise event names, properties, and user context. This is an ongoing instrumentation task spread across all frontend developers. An agent skill that understands an organization’s event taxonomy transforms sporadic coverage into comprehensive adoption. Sporadic tagging leads to customers remaining in lower event tiers, whereas skills automatically drive upsells.

- Feature Flags (e.g., LaunchDarkly, Statsig): Best practice dictates that every new feature should be wrapped in a feature flag. In reality, friction often leads to only a fraction of features being flagged. A skill that enforces a "new feature equals flag by default" policy and understands an organization’s naming conventions not only improves adoption but also subtly shifts engineering behavior, making the correct approach the easiest one.

- Authentication SDKs (e.g., Auth0, Descope): Every new route, API endpoint, or user-facing flow demands identity verification, correct token validation, session handling, and logout logic. Under velocity pressure, developers often shortcut these processes. A skill that enforces "every new endpoint validates identity before execution" and is familiar with the organization’s preferred SDK patterns transforms authentication from an inconsistently applied step into a universally enforced default.

- Authorization SDKs (e.g., Styra, Permit.io, Oso): Authorization logic is notoriously one of the most inconsistently implemented patterns in any codebase. A skill that comprehends an organization’s permission model and automatically integrates authorization at every new endpoint simultaneously enhances security posture and SDK adoption.

- Runtime Application Security (RASP) (e.g., Contrast Security, Arcjet): RASP tools face the same partial-coverage trap, but with more severe consequences. A missed instrumentation point can represent an unprotected attack surface rather than a mere reporting gap. A skill that enforces protection hooks at every new route by default transforms RASP from a partial perimeter into a true runtime fabric. In security, "the developer forgot" is an unacceptable failure mode.

- Secrets Management (e.g., HashiCorp Vault, Hush): Each new database connection string, API key, or credential presents an opportunity for a developer to hardcode it rather than retrieve it from the organization’s secrets store. A skill that intercepts these moments by flagging `os.environ['STRIPE_KEY']` and replacing it with `hush.get('stripe_key')` enforces hygiene precisely at the point of potential failure. Hardcoded secrets often appear as normal code during review, making them invisible to human oversight. An agent, however, can catch these critical oversights.

- Testing Frameworks (e.g., Playwright, Testcontainers, Cypress): While testing tools are not inherently difficult to install, maintaining comprehensive test coverage presents a persistent challenge. Coverage erodes not because developers undervalue tests, but because writing them is often the first task sacrificed under velocity pressure. A skill that generates opinionated, framework-consistent tests alongside every new function or component transforms the agent’s default output from merely providing a feature to delivering "here’s your feature and its tests."

- Durable Workflow Engines (e.g., Orkes, DBOS, Temporal): These tools often come with steep learning curves, characterized by opinionated APIs, subtle correctness requirements around determinism, and specific patterns for handling retries and failures. A skill that encapsulates all this contextual knowledge ensures that the tenth developer interacting with the workflow layer adheres to the same patterns as the first.
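As one illustration of the pattern enforcement described in the list above, the secrets-management case can be sketched as a small static check. This is an assumption-laden example, not the behavior of Vault, Hush, or any real skill. It covers only the simplest situation, a string literal assigned directly to a credential-like variable name, and the name pattern is hypothetical.

```python
import ast
import re

# Assumption for this sketch: a variable whose name contains one of these
# words should never be assigned a string literal directly.
SECRET_NAME = re.compile(r"(key|secret|token|password)", re.IGNORECASE)


def hardcoded_secrets(source: str) -> list[tuple[int, str]]:
    """Return (line, variable) pairs where a secret-like name gets a literal."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Assign):
            continue
        value = node.value
        if not (isinstance(value, ast.Constant) and isinstance(value.value, str)):
            continue
        for target in node.targets:
            if isinstance(target, ast.Name) and SECRET_NAME.search(target.id):
                findings.append((node.lineno, target.id))
    return sorted(findings)


# Parsed only, never executed. The key below is a placeholder, not a real one.
SAMPLE_CONFIG = '''\
import os
STRIPE_KEY = "not-a-real-key"
DB_PASSWORD = os.environ["DB_PASSWORD"]
TIMEOUT = "30"
'''

print(hardcoded_secrets(SAMPLE_CONFIG))  # [(2, 'STRIPE_KEY')]
```

The other categories follow the same shape: a convention the organization has already chosen, encoded once, and applied by the agent at every change instead of being left to each developer's memory.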

Empirical Evidence of Agentic Impact

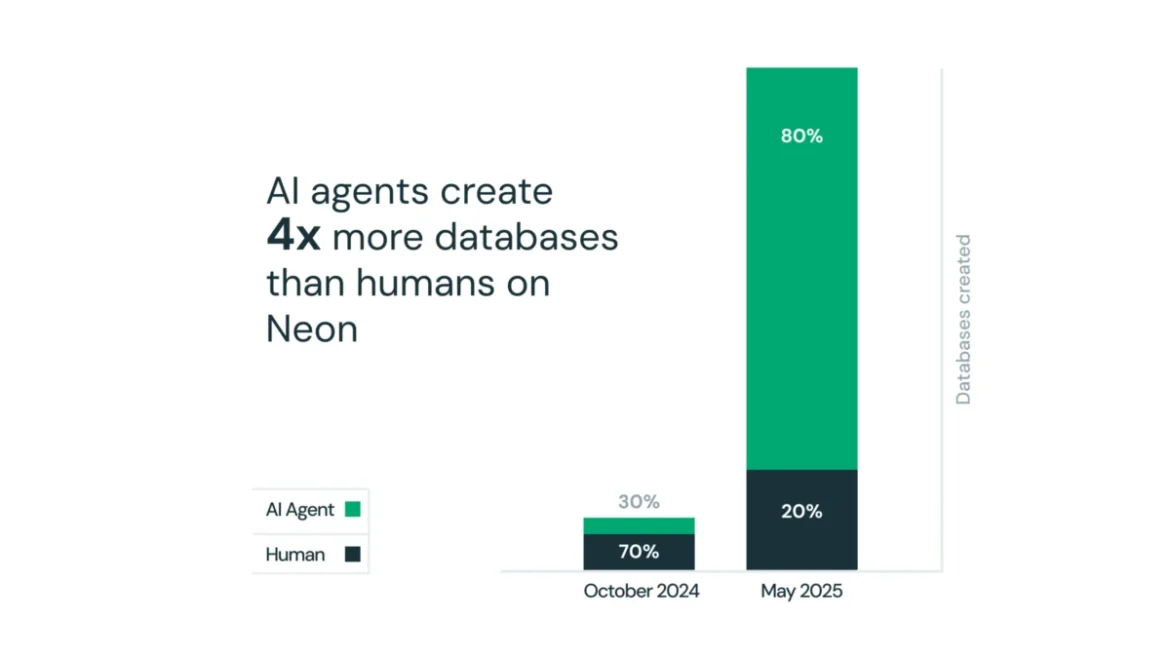

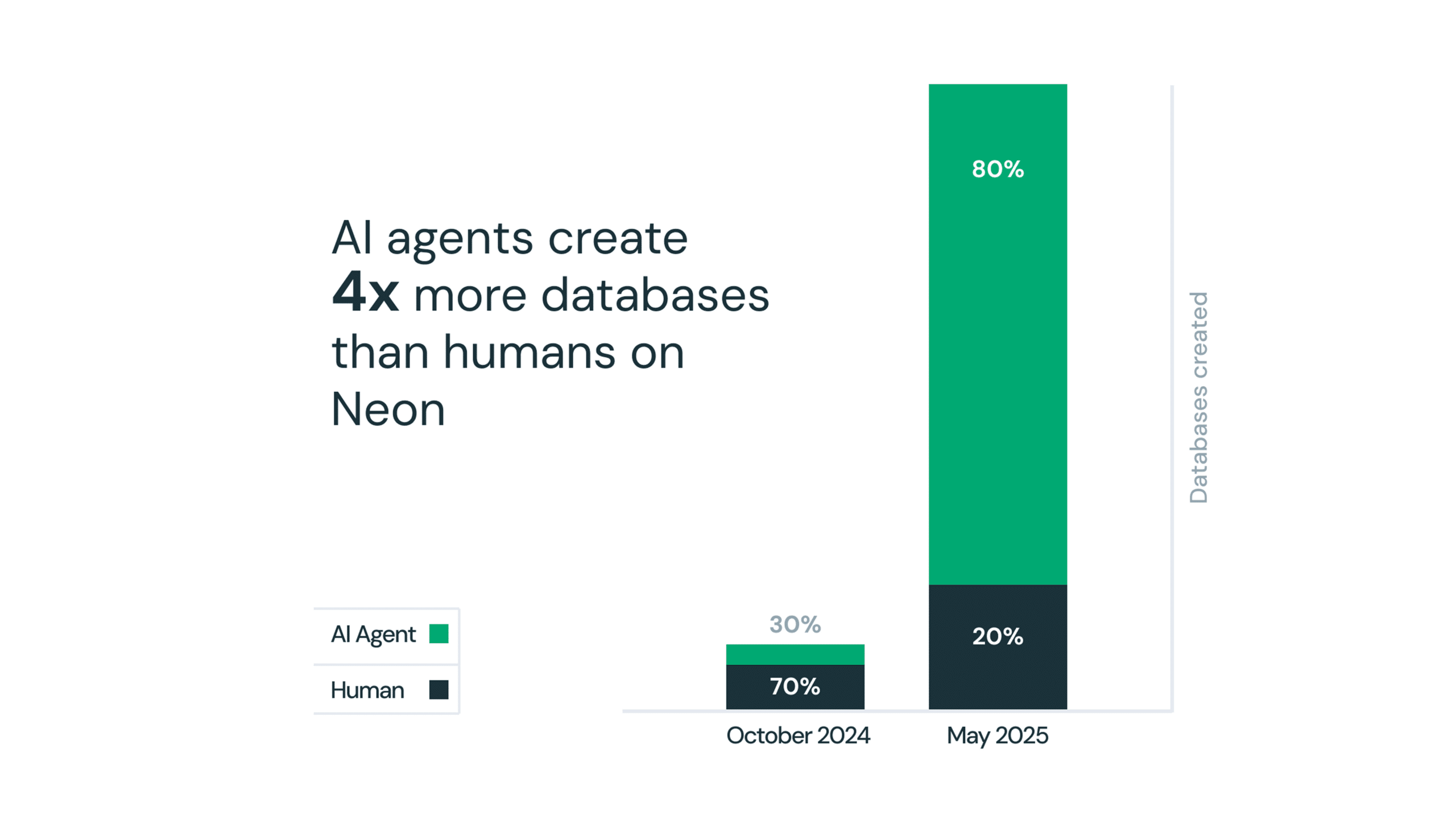

The transformative power of agentic development is already evident. Neon, the serverless PostgreSQL company, made a strategic investment in agent-native distribution by publishing AI rules, Claude Code plugins, Cursor integrations, and a comprehensive agent skills library on GitHub. The result was remarkable: over 80% of databases provisioned on Neon were created by AI agents, not humans. This statistic was so compelling that it became a cornerstone of Databricks’ rationale for acquiring Neon for $1 billion in 2025. Neon didn’t just build a database; it successfully integrated itself into the AI agent’s default workflow, demonstrating a distribution advantage that proved to be worth a billion-dollar exit.

The Strategic Imperative for Builders and Investors

Agent skills fundamentally shift the locus of competitive advantage. Today, the leaders in developer tools are often those who excel at initial installation—winning the "first five minutes." If agent skills become the dominant adoption surface, the competitive landscape will favor those who achieve the deepest and most accurate integration within agent contexts. This rewards a different set of capabilities: the quality and completeness of an organization’s skills, the trust developers place in their agent-layer recommendations, and the speed at which skills are updated as APIs evolve.

This shift has significant implications for investments in developer relations and documentation. The best SDK documentation has always served as a form of distribution, making it easier for developers to understand and correctly utilize a tool. Skills are, in essence, executable documentation. Companies that invest in building high-quality, opinionated, and well-maintained skills are proactively embedding their adoption potential into every agent-assisted developer workflow.

Furthermore, agent skills act as a powerful discovery channel within organizations. When a new developer joins a team and begins working with an AI coding agent, the agent doesn’t start from scratch. Instead, it suggests the tools and patterns already in use by the rest of the organization. Rather than the new developer independently searching for alternatives, the skill surfaces the existing organizational choice. This is how tools will spread virally within organizations in an agentic world—not through Slack recommendations or wiki pages, but through the context provided by the AI agent.

Implications for Founders and Investors

For founders building developer infrastructure, the agent skill is no longer an afterthought but a first-class product artifact. The critical question shifts from "how do we make the first install easy?" to "how do we make every subsequent instrumentation decision easy, for every developer, forever?" The answer lies in developing a skill that imbues the AI agent with an understanding of the API as profound as that of the company’s most experienced solutions engineer.

Investors evaluating developer tools companies must recognize that distribution moats are being fundamentally rebuilt. A company with a mediocre PLG motion but an exceptional skill embedded in every agent context across its enterprise accounts may possess a stronger expansion engine than a competitor with a polished quickstart but a shallow presence in the agent layer. Key metrics are evolving; coverage depth within accounts, rather than just seat count, increasingly signals durable value.

Agent skills also address two coverage gaps that PLG historically failed to reach. The first is retrofitting legacy codebases, where agents can systematically refactor existing uninstrumented code to meet current standards without requiring dedicated engineering sprints. The second is DevOps workflows: infrastructure-as-code, CI/CD pipelines, and deployment scripts can all benefit from the same pattern-enforcement logic applied to application code.

The first wave of PLG focused on removing friction at the point of installation. The second wave is about removing friction at every commit, perpetually. Companies that strategically build for this second wave now will be exceptionally well-positioned in the coming years. The pertinent question for founders and investors alike is straightforward: What is your AI coding-agent skills strategy, and when does it launch?