Software engineering is on the cusp of its third transformative era this century, driven by the growing adoption of agentic artificial intelligence. It follows two earlier industry-defining movements: the rise of open source, which democratized access to code, and the spread of DevOps and agile methodologies, which fostered collaborative development and continuous delivery. Agentic AI promises to move beyond assisting with individual coding tasks to autonomously managing entire software projects and product lifecycles.

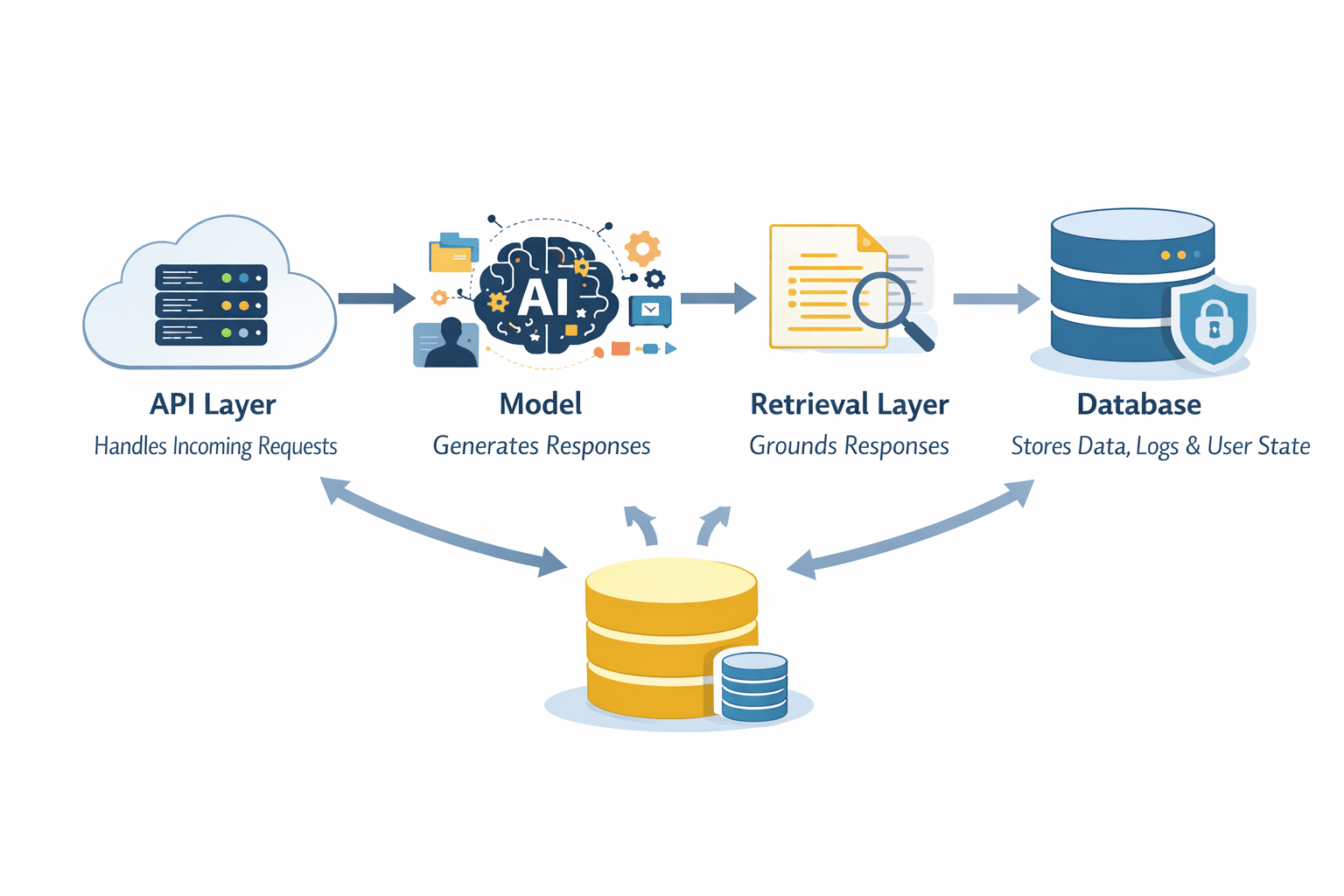

A recent report, based on a survey of 300 engineering and technology executives, underscores this potential. Today, AI in software engineering is largely confined to augmenting specific, well-defined tasks such as coding and testing. Agentic capabilities turn AI into reasoning, self-directing systems that are expected to orchestrate complex software projects with significant autonomy, potentially reshaping the software development and product lifecycle from inception through deployment and ongoing management.

The Accelerating Trajectory of Agentic AI in Software Development

The survey data shows momentum building quickly. Half of all organizations currently identify agentic AI as a top investment priority for their software engineering efforts, and over four-fifths expect to prioritize it within the next two years. That focus is translating into adoption. At the time of the survey, 51% of software teams were already using agentic AI, albeit mostly in limited capacities, and a further 45% had concrete plans to adopt it within the coming 12 months.

Respondents expect the benefits of agentic AI to arrive gradually, much as the gains from DevOps and agile depended on organizational and process change. Over the next two years, a majority anticipate slight (14%) to moderate (52%) improvements, while a significant minority (32%) hold higher expectations, and 9% believe the impact will be genuinely game-changing. In other words, near-term returns are expected to be modest even where the long-term potential is seen as large.

Quantifying the Impact: Speed, Efficiency, and Lifecycle Automation

One of the most immediate and universally anticipated benefits of agentic AI is its impact on time-to-market. The survey revealed that an overwhelming 98% of respondents expect their teams’ software project delivery timelines, from pilot phase to production, to accelerate. The projected average increase in speed across all participating organizations is a remarkable 37%. This substantial acceleration suggests that agentic AI will empower organizations to bring innovative products and features to market far more rapidly, a critical competitive advantage in today’s fast-paced technological landscape.
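To make the 37% figure concrete, here is a minimal sketch of the arithmetic. It assumes a hypothetical 20-week baseline from pilot to production, which is not a number from the report, and the function names are illustrative. Read literally as a 37% increase in speed, the same work takes about 27% less time. If the figure were instead taken as a 37% cut in the timeline itself, the result would be shorter still. Both readings are shown so the difference is visible.

```python
def weeks_after_speed_increase(baseline_weeks: float, speed_increase_pct: float) -> float:
    """Delivery time when the team works speed_increase_pct percent faster."""
    return baseline_weeks / (1 + speed_increase_pct / 100)


def weeks_after_timeline_cut(baseline_weeks: float, cut_pct: float) -> float:
    """Delivery time when the timeline itself shrinks by cut_pct percent."""
    return baseline_weeks * (1 - cut_pct / 100)


# Hypothetical baseline: 20 weeks from pilot to production (an assumption).
baseline = 20.0
faster = weeks_after_speed_increase(baseline, 37)
shorter = weeks_after_timeline_cut(baseline, 37)

print(f"37% faster delivery: {faster:.1f} weeks")    # 14.6 weeks
print(f"37% shorter timeline: {shorter:.1f} weeks")  # 12.6 weeks
```

On this hypothetical baseline, the two readings differ by about two weeks, so it is worth checking which definition a team uses before comparing its own numbers with the survey average.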

Beyond mere speed, the ultimate ambition for most engineering teams lies in achieving full agentic lifecycle management. The survey indicates a strong desire for AI agents to autonomously manage both the product development lifecycle (PDLC) and the software development lifecycle (SDLC) end-to-end. At present, 41% of organizations aim to achieve this comprehensive management for most or all of their products within 18 months. This ambition is set to grow, with expectations that this figure will rise to 72% within two years, provided current trajectories and expectations are met. This vision of agent-managed development signifies a paradigm shift, where AI takes on a central, directive role in the entire creation and iteration process of software.

Navigating the Challenges: Compute Costs and Organizational Inertia

Despite the optimistic outlook, the path to full agentic AI integration is not without hurdles. The survey identified compute costs and the complexity of integrating with existing applications as the primary challenges organizations face, particularly in early-adopter sectors such as media and entertainment, and technology hardware. These technical barriers are often compounded by deeper organizational and cultural challenges.

Interviews with industry experts accompanying the report highlighted the critical importance of change management. Shifting established workflows, retraining personnel, and fostering a culture that embraces AI-driven autonomy are identified as substantial, often underestimated, obstacles. Just as the widespread adoption of DevOps required a fundamental rethinking of team structures and communication, so too will the integration of agentic AI necessitate a deliberate and strategic approach to organizational transformation. The potential gains in speed, efficiency, and quality are substantial, promising to outweigh the inevitable difficulties associated with such profound change.

Historical Context: The Evolution of Software Engineering Paradigms

To fully appreciate the significance of agentic AI, it is crucial to contextualize it within the historical evolution of software engineering.

The Open Source Revolution (Early 2000s onwards): The early 21st century saw the open source movement reach the mainstream. Projects such as Linux and Apache, together with code-hosting platforms such as GitHub, made source code readily available and fostered collaboration and innovation on an unprecedented scale. This democratized access to tools and frameworks, enabling developers worldwide to build on existing work, accelerate development cycles, and face lower barriers to entry. Software development shifted from a largely proprietary, siloed endeavor to a collaborative, community-driven ecosystem.

The Agile and DevOps Transformation (Mid-2000s onwards): Alongside open source, agile methodologies such as Scrum and Kanban gained ground as an answer to the inflexibility of traditional waterfall development. Agile emphasized iterative development, customer feedback, and rapid adaptation to changing requirements. DevOps, a set of practices that aims to shorten the systems development life cycle and provide continuous delivery with high software quality, rose at around the same time. It broke down the silos between development and operations teams, fostered collaboration, automated build and deployment processes, and enabled continuous integration and continuous delivery (CI/CD). The result was a significant increase in the speed and reliability of software releases.

The Dawn of Agentic AI (Present and Future): Agentic AI represents the next logical leap. While previous shifts focused on democratizing access to code and improving the development and delivery processes through human collaboration and automation, agentic AI aims to imbue the development process itself with intelligent, autonomous agents. These agents are not merely tools but active participants capable of reasoning, planning, and executing complex tasks with minimal human oversight. This promises to move beyond incremental improvements to a fundamental reimagining of how software is conceived, built, tested, deployed, and maintained.

Looking Ahead: Implications and Future Prospects

The widespread adoption of agentic AI in software engineering carries profound implications. Organizations that successfully navigate the technical and organizational challenges will likely experience:

- Substantial Increases in Productivity: By offloading complex and time-consuming tasks to AI agents, human engineers can focus on higher-level problem-solving, innovation, and strategic decision-making.

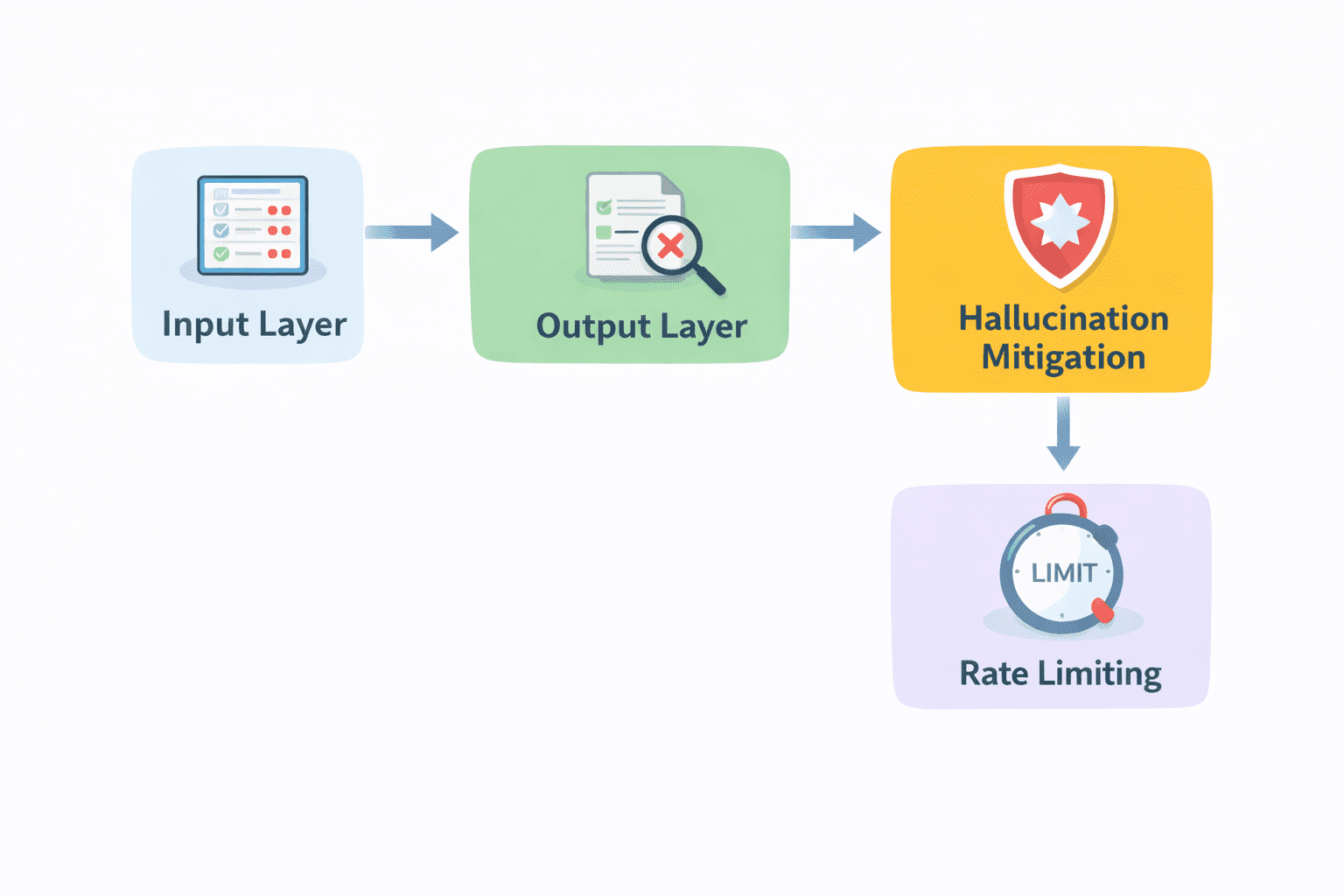

- Enhanced Software Quality: Agentic AI can meticulously analyze code for bugs, security vulnerabilities, and performance issues, potentially leading to more robust and reliable software.

- Democratization of Advanced Development: As agentic AI tools become more sophisticated and accessible, they could lower the barrier to entry for complex software development, empowering smaller teams or even individuals to undertake ambitious projects.

- Reshaping the Engineering Workforce: The role of the software engineer will likely evolve, with a greater emphasis on overseeing AI agents, defining strategic goals, and ensuring ethical AI deployment. Continuous learning and adaptation will be paramount.

- Accelerated Innovation Cycles: The ability to rapidly prototype, test, and deploy new features and products will fuel a faster pace of innovation across all industries.

The journey towards fully agent-managed development is likely to be a phased one. Initial implementations will focus on augmenting human capabilities, gradually transitioning to more autonomous operations as trust and proficiency grow. The report’s findings suggest a clear roadmap: building the foundational capabilities, addressing integration and cost challenges, and fostering the necessary organizational changes.

The third seismic shift in software engineering is not a distant theoretical concept but a tangible, unfolding reality. As organizations increasingly embrace agentic AI, the landscape of software development is set to transform, promising new levels of efficiency, speed, and innovation. The success of this transition will hinge not only on technological advances but also on the willingness of organizations to adapt their processes, cultures, and workforce strategies to harness the full power of intelligent, autonomous agents.

Source Report: This article is based on insights from a report titled "Agentic AI in Software Engineering" by MIT Technology Review Insights, in collaboration with SoftServe. The report surveyed 300 engineering and technology executives and included qualitative insights from expert interviews. The full report is available for download at https://bit.ly/3On1wP1.

Disclaimer: The content was produced by Insights, the custom content arm of MIT Technology Review. It was researched, designed, and written by human writers, editors, analysts, and illustrators. AI tools may have been used in secondary production processes but underwent thorough human review.