The journey from a perfectly functioning Large Language Model (LLM) prototype on a developer’s machine to a robust, scalable, and reliable production system is fraught with challenges. While the initial development phase often focuses on model performance and prompt engineering, the true test lies in how these sophisticated AI systems behave when exposed to the unpredictable realities of real-world usage. This article outlines a practical, seven-step framework for navigating the complexities of LLM deployment, transforming promising models into dependable assets.

The common narrative of LLM development often begins with a sense of triumph. A model, meticulously fine-tuned or prompted, delivers impressive results in a controlled environment. Responses are swift, accuracy is high, and the user experience feels seamless. However, this illusion of perfection frequently shatters upon deployment. Latency creeps in, operational costs escalate, and users invariably present scenarios the development team hadn’t anticipated. The model, once a star performer, begins to falter, generating answers that look plausible on the surface but can disrupt critical workflows.

This divergence between simulated success and real-world performance is a significant hurdle. It shows that the core challenge is not merely building an LLM, but engineering it for reliability, scalability, and practical usability in a production setting. Deployment goes beyond simple API calls or model hosting; it requires strategic decisions about architecture, cost management, latency optimization, safety protocols, and continuous monitoring. Overlooking these aspects leads to systems that work at first but fail quietly over time. Many teams, captivated by advanced prompts and raw model capabilities, underestimate the gap between a working prototype and a production-ready system and devote too few resources to understanding how real users actually interact with it.

Step 1: Crystallizing the Use Case

The foundation of a successful LLM deployment is laid long before any code is written, with the precise definition of the intended use case. Vague objectives inevitably lead to an over-engineered system in some areas and critical oversights in others. Clarity here means dissecting the problem into manageable, specific tasks. Instead of a generic "build a chatbot," a more effective approach defines its precise function: will it answer frequently asked questions, manage customer support tickets, or guide users through a complex product onboarding process? Each of these scenarios demands a distinct architectural and functional design.

Equally crucial is defining clear input and output expectations. What forms of data will users provide? What is the desired output format – unstructured text, structured JSON, or a specific hierarchical data representation? These specifications directly influence prompt design, the implementation of validation layers, and the user interface. Furthermore, establishing measurable success metrics is paramount. Without them, evaluating the system’s effectiveness becomes subjective. These metrics could encompass response accuracy, task completion rates, operational latency, or user satisfaction scores. The more precise the metric, the more informed the trade-offs made during development and deployment become. For instance, a general-purpose chatbot presents broad and unpredictable challenges. In contrast, a structured data extractor, with its clearly defined inputs and outputs, is inherently easier to test, optimize, and deploy reliably. The principle is straightforward: specificity in use case definition simplifies every subsequent stage of the deployment process.
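To make the idea of explicit output expectations concrete, here is a minimal sketch of a validation layer for a structured data extractor. The field names in `EXPECTED_FIELDS` and the function `validate_output` are hypothetical, chosen only for illustration; a real system would likely use a schema library instead of hand-written checks.

```python
import json

# Hypothetical schema for a structured data extractor: field name -> expected type.
EXPECTED_FIELDS = {"customer_name": str, "order_id": str, "quantity": int}


def validate_output(raw_text: str) -> dict:
    """Parse model output as JSON and check it against EXPECTED_FIELDS.

    Raises ValueError describing the first problem found.
    """
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    for name, expected_type in EXPECTED_FIELDS.items():
        if name not in data:
            raise ValueError(f"missing field: {name}")
        value = data[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise ValueError(f"field {name!r} should be {expected_type.__name__}")
    return data
```

Because the expected shape is written down, the same check can serve as a test oracle during development and as a runtime gate in production.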

Step 2: Strategic Model Selection, Not Necessarily the Largest

With a well-defined use case, the next critical juncture is selecting the appropriate language model. The temptation to default to the most powerful, state-of-the-art model available is understandable, as larger models often exhibit superior performance on benchmark tests. However, in a production environment, raw performance is only one facet of a multifaceted decision.

Cost is frequently the primary limiting factor. Larger models incur significantly higher operational expenses, particularly at scale. What may seem manageable during limited testing can quickly become an unsustainable expenditure under substantial real-world traffic. For example, serving millions of queries per day with a large proprietary LLM can cost hundreds of thousands of dollars per month or more, depending on prompt length and per-token pricing, a figure that demands careful financial planning.
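A back-of-the-envelope estimate helps show how quickly these costs grow. The sketch below assumes per-token pricing quoted per million tokens; the traffic figures and prices in the usage note are made-up illustrations, not any provider's actual rates.

```python
def monthly_cost_usd(requests_per_day: int,
                     input_tokens: int,
                     output_tokens: int,
                     price_in_per_million: float,
                     price_out_per_million: float,
                     days: int = 30) -> float:
    """Estimate monthly spend from average token counts per request."""
    # Cost of one request, expressed in "dollars times one million".
    per_request_scaled = (input_tokens * price_in_per_million
                          + output_tokens * price_out_per_million)
    return requests_per_day * days * per_request_scaled / 1_000_000
```

With hypothetical values of 1,000,000 requests per day, 1,000 input tokens and 300 output tokens per request, and prices of $3 and $15 per million input and output tokens, `monthly_cost_usd(1_000_000, 1000, 300, 3.0, 15.0)` evaluates to 225,000 dollars per month.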

Latency is another critical consideration. Larger models typically require more computational resources, leading to longer response times. For applications directly interacting with users, even minor delays can degrade the perceived performance and user experience. While accuracy remains important, it must be contextualized. A slightly less powerful model that excels at the specific task at hand might be a more pragmatic choice than a larger, more general-purpose model that incurs higher costs and slower response times.

The choice between using hosted APIs from providers like OpenAI, Google, or Anthropic, versus deploying open-source models such as those from Meta’s Llama series or Mistral AI, also presents a significant decision. Hosted APIs offer ease of integration and reduced infrastructure management overhead but come with a trade-off in terms of control and potentially higher per-token costs at extreme scale. Open-source models provide greater flexibility, customization, and the potential for lower long-term operational costs, but they demand substantial investment in infrastructure, expertise, and ongoing maintenance. The optimal choice is rarely the largest model, but rather the one that strikes the most effective balance between the specific use case requirements, budgetary constraints, and desired performance benchmarks.

Step 3: Architecting for Scalability and Reliability

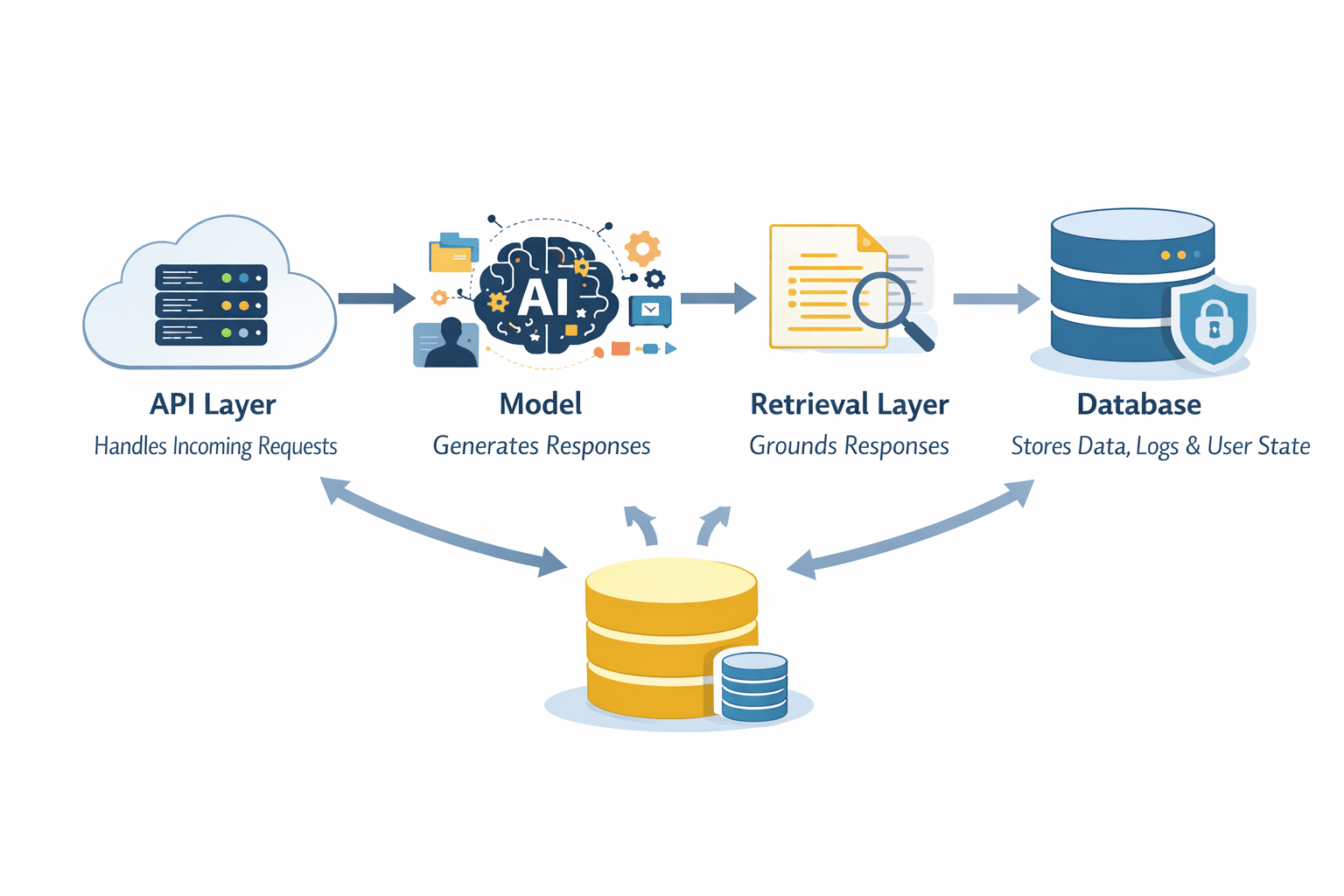

As LLM applications move beyond simple prototypes, the model itself transforms from the sole protagonist into a component within a more expansive system architecture. LLMs rarely operate in isolation in production environments. A typical robust setup incorporates multiple layers, including an API layer to manage incoming requests, the LLM for content generation, a retrieval layer to ground responses in factual data, and a database for storing information, logs, and user-specific states. Each element is designed to contribute to the overall reliability and scalability of the system.

The API layer serves as the system’s gateway. It orchestrates incoming requests, handles authentication and authorization, and intelligently routes inputs to the appropriate internal components. This layer is also where critical control mechanisms are implemented, such as rate limiting, input validation, and access control, ensuring the system is protected from misuse and overload.
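Rate limiting is one of the control mechanisms mentioned above. A common way to implement it is a token bucket; the class below is a minimal single-process sketch under that assumption, not a description of any particular gateway product. The injectable `clock` parameter is there only to make the behavior easy to test.

```python
import time


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start with a full bucket
        self.updated = clock()

    def allow(self) -> bool:
        """Return True and consume a token if the request may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill according to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

In practice one bucket would be kept per API key or per user, and a multi-instance deployment would need shared storage for the bucket state.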

The model occupies a central position, but its responsibilities can be augmented. Retrieval-Augmented Generation (RAG) systems, for instance, can furnish the LLM with relevant context drawn from external data sources. This significantly reduces the incidence of hallucinations and enhances the factual accuracy of the generated output. Databases are indispensable for storing structured data, user interaction histories, and system outputs, enabling efficient data retrieval for future use and analysis.

A fundamental architectural decision revolves around whether the system will be stateless or stateful. Stateless systems process each request independently, which simplifies scaling operations as there is no need to manage session data across multiple instances. Stateful systems, conversely, maintain context across interactions, potentially leading to a more fluid user experience. However, this statefulness introduces considerable complexity in data storage, retrieval, and synchronization mechanisms, demanding careful architectural planning.

Conceptualizing the system as a series of pipelines can be highly beneficial. Rather than a monolithic step that generates an answer, a well-designed pipeline involves a sequential flow: input reception, validation, contextual enrichment, model processing, and output handling. Each stage within this pipeline is designed to be independently controllable and observable, facilitating debugging and optimization.
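The pipeline view can be sketched as a list of small stage functions that each take and return a request object. Everything here is illustrative: the `Request` fields, the stage names, and especially `call_model`, which is a stub standing in for a real LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Request:
    user_input: str
    context: List[str] = field(default_factory=list)
    output: str = ""


def validate(req: Request) -> Request:
    # Reject inputs the rest of the pipeline should never see.
    if not req.user_input.strip():
        raise ValueError("empty input")
    return req


def enrich(req: Request) -> Request:
    # Placeholder for retrieval; a real system would query a search index here.
    req.context.append("(retrieved context placeholder)")
    return req


def call_model(req: Request) -> Request:
    # Stub standing in for the actual LLM call.
    req.output = f"echo: {req.user_input}"
    return req


def handle_output(req: Request) -> Request:
    # Final formatting before the response leaves the system.
    req.output = req.output.strip()
    return req


PIPELINE = [validate, enrich, call_model, handle_output]


def run_pipeline(req: Request,
                 on_stage: Optional[Callable[[str, Request], None]] = None) -> Request:
    """Run each stage in order, calling `on_stage` after every step."""
    for stage in PIPELINE:
        req = stage(req)
        if on_stage is not None:
            on_stage(stage.__name__, req)  # hook for logging or metrics
    return req
```

Keeping stages separate means each one can be tested, timed, or swapped out without touching the others.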

Step 4: Implementing Robust Guardrails and Safety Layers

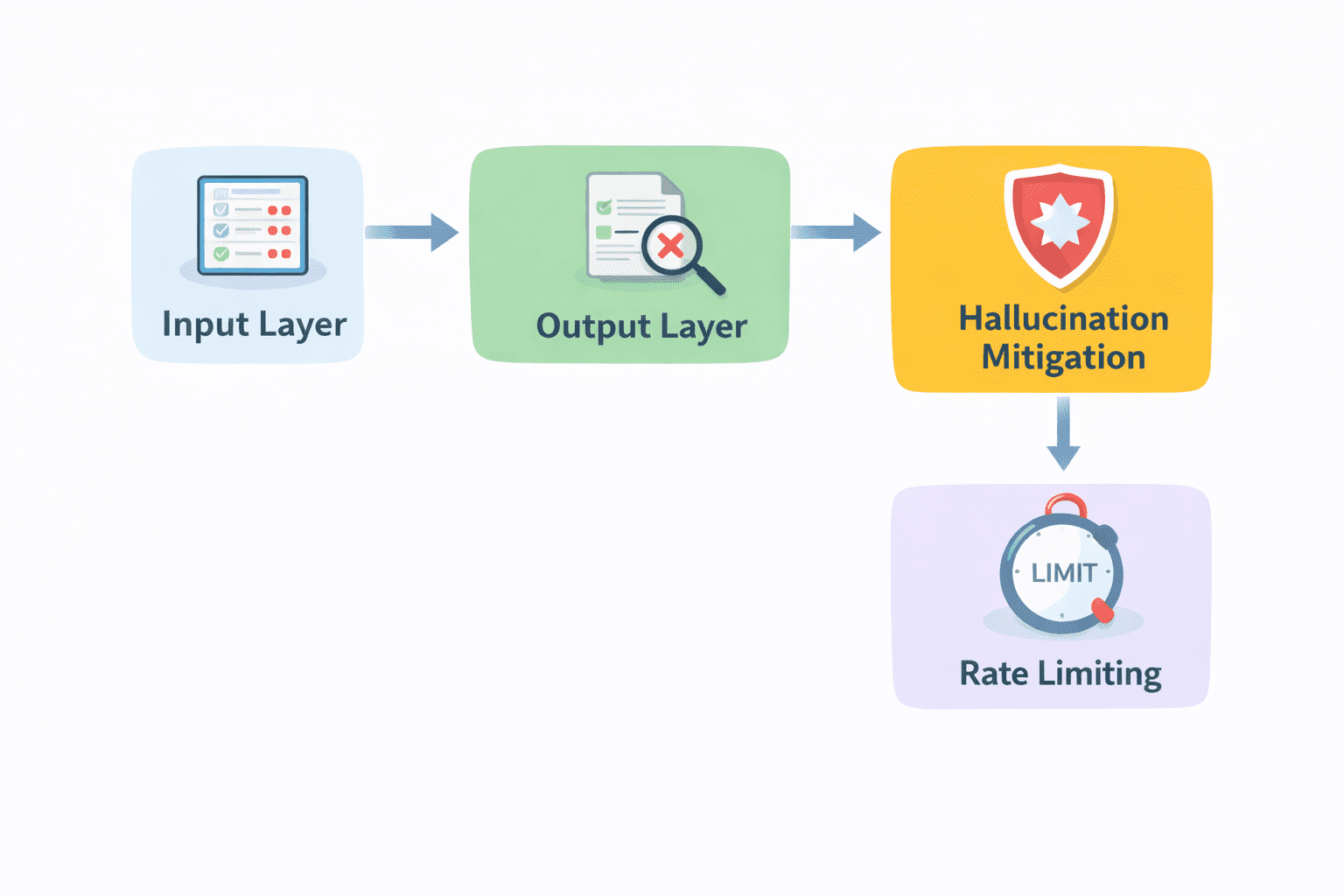

Even with a sophisticated architecture and a well-chosen model, raw LLM output should never be directly exposed to end-users without stringent oversight. Language models, while incredibly powerful, offer no guarantee of safe behavior: whatever safety training a model has received, it has no reliable understanding of an application’s ethical or business boundaries. Without proper constraints, they can inadvertently generate responses that are factually incorrect, irrelevant to the user’s query, or even harmful and offensive.

Guardrails are the essential protective barriers that mitigate these risks. These are not merely optional additions but fundamental requirements for responsible LLM deployment. They encompass a range of techniques designed to steer the model’s output within acceptable parameters. This can include:

- Content Moderation: Employing filters to detect and block profanity, hate speech, or personally identifiable information (PII) in both user inputs and model outputs.

- Fact-Checking and Grounding: Integrating mechanisms that verify generated statements against trusted knowledge bases, especially for applications requiring high factual accuracy.

- Tone and Style Enforcement: Ensuring the model adheres to a predefined brand voice or communication style, preventing inappropriate or off-brand messaging.

- Task-Specific Constraints: For applications with defined goals, such as booking appointments or retrieving specific data, guardrails ensure the model stays within the bounds of its intended function and does not attempt to perform actions outside its scope.

- Prompt Injection Defenses: Implementing safeguards against malicious attempts to manipulate the LLM’s behavior through crafted inputs.

Without these protective layers, even a highly capable model can produce outputs that erode user trust or introduce significant risks. The strategic implementation of guardrails transforms raw, potentially unpredictable generation into a controlled and reliable system. For example, a customer service bot that is programmed to avoid discussing sensitive financial details or making medical diagnoses relies heavily on these safety mechanisms to maintain user trust and comply with regulations.
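As a very small illustration of two of the guardrail types listed above (content moderation for PII and task-specific constraints), the following sketch post-processes model output before it reaches the user. The blocked topics, the refusal text, and the email pattern are all hypothetical and deliberately simplistic; production systems typically rely on dedicated moderation models or services in addition to rules like these.

```python
import re

# Simplistic email pattern, for illustration only.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical topics this assistant must not discuss.
BLOCKED_TOPICS = ("diagnosis", "account number")

REFUSAL = "Sorry, I can't help with that here."


def apply_output_guardrails(text: str) -> str:
    """Refuse out-of-scope answers and redact email addresses from the rest."""
    lowered = text.lower()
    if any(topic in lowered for topic in BLOCKED_TOPICS):
        return REFUSAL
    return EMAIL_RE.sub("[redacted email]", text)
```

The same function shape works for input-side checks, so user messages can be screened before they are ever sent to the model.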

Step 5: Optimizing for Performance: Latency and Cost Synergy

Once an LLM system is operational, performance metrics like response time and operational cost cease to be mere technical details and evolve into critical user-facing considerations. Sluggish responses can quickly lead to user frustration and abandonment, while escalating costs can severely limit the system’s scalability and long-term viability. Addressing these twin challenges requires a multifaceted approach focused on efficiency.

Caching stands out as one of the most straightforward yet effective methods for enhancing both speed and cost-efficiency. If users frequently ask similar questions or trigger comparable workflows, there is no need to generate a fresh response from the LLM every single time. Storing and intelligently reusing previously generated results can dramatically reduce both latency and computational expenditure. For instance, caching answers to common FAQs can significantly offload the LLM.
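A minimal sketch of such a cache is shown below. It assumes exact-match reuse after light normalization (lowercasing and collapsing whitespace) plus a time-to-live; `ResponseCache` and its parameters are illustrative names, and the `generate` callable stands in for whatever function actually calls the model.

```python
import hashlib
import time


class ResponseCache:
    """In-memory cache keyed on a normalized prompt, with a time-to-live."""

    def __init__(self, ttl_seconds: float = 3600, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (stored_at, response)

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize so trivial differences in case or spacing share one entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_generate(self, prompt: str, generate) -> str:
        key = self._key(prompt)
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # fresh cached answer, no model call
        response = generate(prompt)
        self._store[key] = (now, response)
        return response
```

Exact-match caching only helps when prompts repeat verbatim or nearly so; matching paraphrased questions would require a similarity-based approach, which is outside this sketch.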

Streaming responses is another technique that dramatically improves perceived performance. Instead of forcing users to wait for the entire output to be generated, presenting results as they are produced allows users to begin engaging with the information sooner. While the total processing time might remain the same, the user experience feels substantially faster.
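The pattern can be sketched with a plain Python generator. Here `fake_token_stream` is a stand-in for a provider's streaming response, and `send` is a hypothetical callback that would push each chunk to the client (for example over a WebSocket or server-sent events).

```python
from typing import Callable, Iterable, Iterator


def fake_token_stream(text: str) -> Iterator[str]:
    """Stand-in for a model that yields its output piece by piece."""
    for word in text.split():
        yield word + " "


def stream_to_client(tokens: Iterable[str], send: Callable[[str], None]) -> str:
    """Forward each chunk as soon as it arrives and return the full text."""
    parts = []
    for token in tokens:
        send(token)          # the user sees this chunk immediately
        parts.append(token)  # keep a copy for logging or caching
    return "".join(parts)
```

Collecting the chunks while forwarding them means the complete response is still available afterwards for logging and guardrail checks.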

A practical strategy involves dynamic model selection. Not every user query or task necessitates the deployment of the most powerful and expensive LLM. Simpler requests can be efficiently handled by smaller, more cost-effective models, while more complex or critical tasks can be intelligently routed to stronger, albeit more resource-intensive, models. This intelligent routing mechanism helps maintain operational costs within budget without compromising quality where it is most needed.
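A routing rule can start out very simple. The sketch below uses prompt length and an explicit `critical` flag as the routing signals; both the heuristic and the model names `"small-model"` and `"large-model"` are placeholders, and real systems often use a classifier or task type instead.

```python
def choose_model(prompt: str, critical: bool = False, max_small_words: int = 50) -> str:
    """Pick a model tier: long or critical requests go to the larger model."""
    if critical or len(prompt.split()) > max_small_words:
        return "large-model"
    return "small-model"
```

Whatever heuristic is used, logging the chosen tier alongside quality signals makes it possible to check later whether the cheaper model is actually good enough for the traffic it receives.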

For systems designed to handle multiple requests concurrently, batching can yield significant efficiency gains. By grouping individual requests and processing them together, systems can reduce per-request overhead and improve overall throughput, at the cost of a short wait while each batch fills.
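The grouping step itself is straightforward, as the following sketch shows. It assumes a hypothetical `batch_fn` that accepts a list of prompts and returns one result per prompt in the same order; whether a given model server or API offers such a batched call is something to verify for the specific stack.

```python
from typing import Callable, List, Sequence


def process_in_batches(prompts: Sequence[str],
                       batch_fn: Callable[[Sequence[str]], List[str]],
                       batch_size: int = 8) -> List[str]:
    """Send prompts to `batch_fn` in fixed-size groups, preserving order."""
    results: List[str] = []
    for start in range(0, len(prompts), batch_size):
        batch = prompts[start:start + batch_size]
        results.extend(batch_fn(batch))
    return results
```

For example, 20 prompts with `batch_size=8` result in three calls to `batch_fn` (8, 8, and 4 prompts) instead of 20 individual calls.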

The overarching principle guiding these optimization efforts is the pursuit of balance. The goal is not to achieve peak speed or minimal cost in isolation, but rather to find an equilibrium where the system remains responsive and provides an excellent user experience while simultaneously remaining economically sustainable. This often involves a continuous process of performance analysis and iterative refinement.

Step 6: Establishing Comprehensive Monitoring and Logging Frameworks

The transition to a live production environment underscores the absolute necessity of comprehensive visibility into system operations. Without robust monitoring and logging, managing an LLM system becomes akin to navigating blindfolded. The foundational element of this visibility is logging. Every incoming request, every generated response, and every intermediate step within the processing pipeline must be meticulously recorded. This detailed log data is indispensable for understanding system behavior, diagnosing issues, and reconstructing events when problems arise.
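One common way to make such logs usable is to emit one JSON object per event and tie all events of a request together with a shared ID. The sketch below uses Python's standard `logging` and `json` modules; the logger name `llm_app`, the `log_event` helper, and the field names are illustrative assumptions.

```python
import json
import logging
import time

logger = logging.getLogger("llm_app")


def log_event(stage: str, request_id: str, **fields) -> str:
    """Emit one JSON log line for a pipeline stage and return it."""
    record = {"ts": time.time(), "request_id": request_id, "stage": stage}
    record.update(fields)
    line = json.dumps(record, default=str)  # default=str keeps odd types loggable
    logger.info(line)
    return line
```

A call such as `log_event("model_call", request_id, latency_ms=412, model="small-model")` then produces a line that log tooling can filter by `request_id` to reconstruct a single request end to end.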

Error tracking builds upon this logging foundation. Instead of manually sifting through vast quantities of log data, automated systems should be in place to surface failures promptly. This includes identifying timeouts, invalid outputs, unexpected exceptions, or deviations from predicted behavior. Early detection of such errors is crucial for preventing minor glitches from escalating into major system disruptions.

Performance metrics are equally vital. Key performance indicators (KPIs) such as average response times, request success rates, error frequencies, and the identification of potential bottlenecks must be continuously tracked. These metrics provide objective data that highlights areas requiring optimization or immediate attention. For instance, a sudden spike in latency for a specific type of query could indicate a need to re-evaluate the model or the retrieval mechanism for that particular use case.
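As a rough sketch of how such KPIs might be computed from logged requests, the code below derives median and 95th-percentile latency plus the success rate. The record shape (`latency_ms`, `ok`) is an assumption, and the percentile uses the simple nearest-rank method; a metrics system would normally do this continuously over time windows.

```python
import math
from typing import Dict, List, Sequence


def percentile(values: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(requests: List[Dict]) -> Dict[str, float]:
    """Summarize latency and success rate for a batch of request records."""
    latencies = [r["latency_ms"] for r in requests]
    succeeded = sum(1 for r in requests if r["ok"])
    return {
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "success_rate": succeeded / len(requests),
    }
```

Tracking a high percentile alongside the average matters because a small share of very slow requests can hide behind a healthy-looking mean.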

Finally, incorporating user feedback mechanisms adds another critical layer of insight. From a purely technical standpoint, a system might appear to be functioning correctly, yet still produce suboptimal results from the user’s perspective. Explicit feedback, such as user ratings or direct comments, and implicit signals, such as user engagement patterns or task completion rates, offer invaluable perspectives on the system’s real-world effectiveness. These diverse data streams—logs, errors, performance metrics, and user feedback—collectively paint a comprehensive picture of the system’s health and user experience.

Step 7: Embracing Iteration Through Real-World User Feedback

Deploying an LLM system is not an endpoint but the start of a continuous improvement cycle. However meticulous the design and testing phases, real users will invariably interact with the system in unforeseen ways. They will pose novel questions, submit unconventional inputs, and push the system into edge cases that never surfaced during development.

This is precisely where the power of iteration becomes paramount. A/B testing offers a structured methodology for this process. Development teams can deploy different versions of prompts, model configurations, or system workflows to segments of the user base and rigorously compare their performance. This data-driven approach replaces guesswork with empirical evidence, revealing which variations yield superior outcomes.
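A prerequisite for A/B testing is assigning each user to a variant consistently, so the same person always sees the same version. One simple approach, sketched below, hashes the user ID together with an experiment name; the function name and the variant labels are illustrative.

```python
import hashlib
from typing import Sequence


def assign_variant(user_id: str,
                   experiment: str,
                   variants: Sequence[str] = ("control", "treatment")) -> str:
    """Deterministically map a user to one variant of an experiment."""
    # Including the experiment name keeps assignments independent across experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]
```

Because the assignment depends only on the inputs, no per-user state needs to be stored, and the variant can be recomputed later when analyzing logs.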

Prompt iteration continues in this phase, but it is now grounded in actual usage patterns and identified failure modes. Instead of optimizing in isolation, prompts are refined based on observed interactions and the types of errors the system is producing. This iterative refinement extends to other components of the system as well. The quality of retrieved context in RAG systems, the efficacy of guardrails, and the logic governing dynamic model routing can all be progressively enhanced over time.

The most influential input for this iterative loop is user behavior. Analyzing what users click on, where they disengage from the system, the patterns in their repeated queries, and the nature of their complaints provides profound insights into usability and effectiveness. These behavioral signals often reveal subtle problems that might elude purely quantitative metrics. Over time, this creates a virtuous cycle: users interact with the system, the system gathers valuable behavioral data, and this data directly informs and drives improvements, making the system increasingly aligned with the nuances of real-world usage.

Conclusion: Building Resilient LLM Systems

The successful deployment of language models transcends mere technical execution; it is fundamentally a design challenge. While the LLM itself is a critical component, its ultimate success hinges on the synergy and robustness of the surrounding ecosystem. The architecture, the implementation of guardrails, the sophistication of the monitoring framework, and the commitment to an iterative improvement process collectively shape the reliability and efficacy of the final system.

Production-ready LLM deployments prioritize reliability above all else. They are engineered to perform consistently across a spectrum of conditions, ensuring predictable behavior even under stress. Crucially, these systems are built with scalability in mind, capable of handling escalating user demand without compromising performance or stability. Furthermore, they are designed for continuous enhancement, leveraging feedback loops and iterative refinement to adapt and improve over time. It is this holistic approach, addressing the interplay of technology, user experience, and operational management, that distinguishes truly resilient and impactful LLM systems from their fragile counterparts.